Building Defensible AI Products in SaaS: The Behavioural Moat Framework

Behavioural Engineering in AI-Driven SaaS: How Product Teams Build Defensible AI Products in a Best-of-Breed World

The AI arms race has made one thing painfully clear for established SaaS vendors: the cost of being “good enough” has dropped. A thousand narrowly focused AI tools can now replace parts of a monolith overnight. That raises an existential question for product leaders: how do you make AI part of your product’s defensibility rather than a vector for churn? The answer is not just better models or more compute — it’s behavioural engineering: designing the product so that user behaviour, trust, context, and social dynamics make your AI-enabled SaaS stickier, safer, and harder to replace.

This article gives a practical framework (theory → patterns → playbook), case studies (Grammarly, Notion, Slack, Duolingo, Figma, and others, plus cautionary failures), and scholarly and practitioner references so you can build behavioural engineering into your product roadmap decisions.

Why behavioural engineering matters for AI in SaaS

Users don’t just buy capabilities — they buy predictable habits and institutional practices. Habit formation research shows that repeated performance in consistent contexts creates automaticity; products that anchor new workflows into users’ routines create durable behavioural lock-in.

Trust determines whether people accept, verify, or ignore AI outputs. Research on trust in automation demonstrates that design choices influence “appropriate reliance” — too little trust and users ignore the AI; too much and they over-rely (automation bias). Designing for calibrated trust is essential when AI outputs affect decisions or workflows.

Human–AI combinations are not automatically synergistic. A large meta-analysis (Vaccaro et al., 2024) shows that human–AI systems, on average, do not outperform the best single agent (human or AI) in many tasks; gains depend on task type, interface design, and relative competencies. This means behavioural design helps determine whether your AI augments or undermines value.

Network effects and institutional complementarities keep winners dominant. Economic theory on network externalities explains why platforms with strong usage networks or complementary assets are harder to displace — and behavioural engineering is how you create those complementary assets (shared memory, workflows, templates, norms).

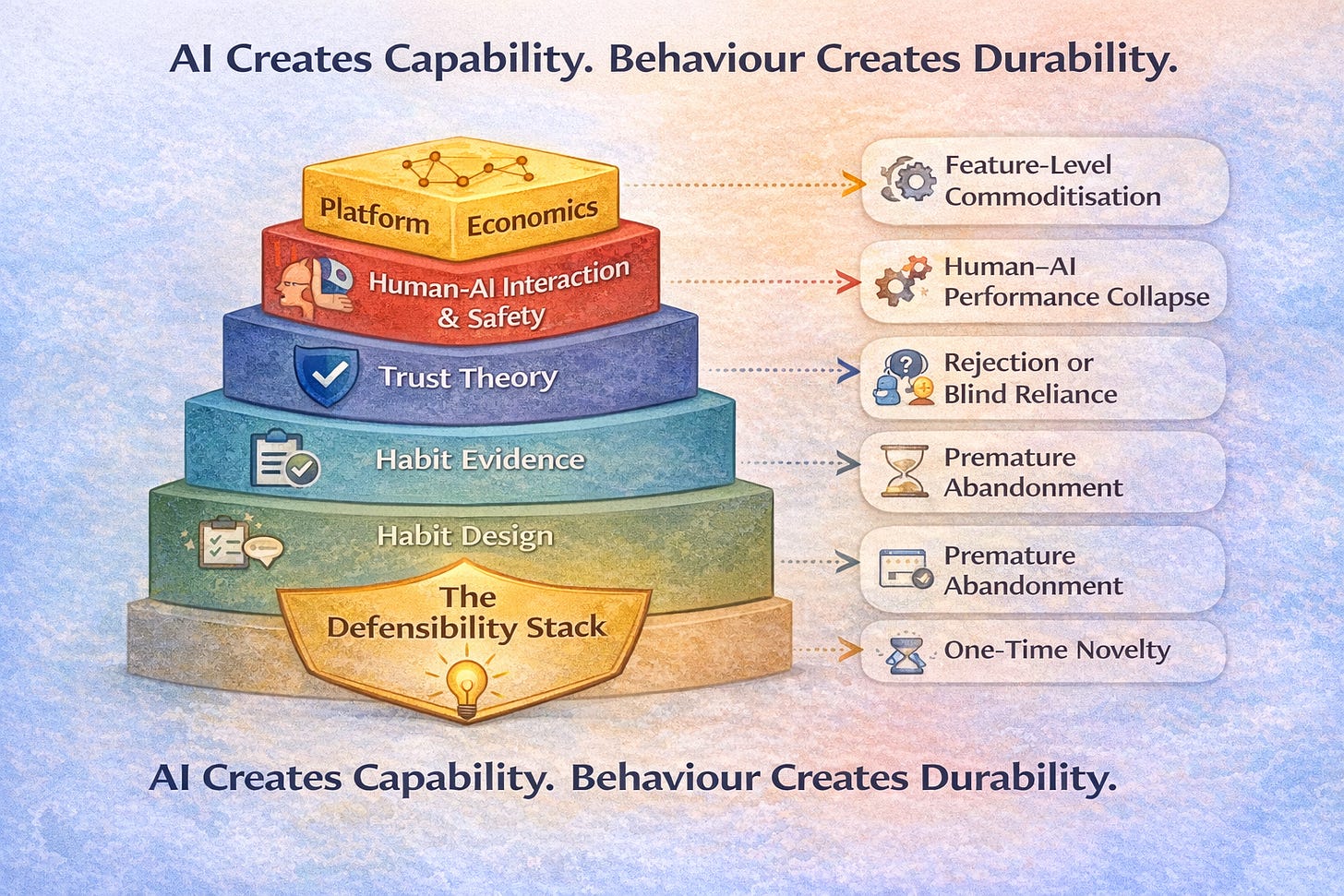

A theory stack for behavioural engineering (a deeper primer)

Behavioural engineering for AI-enabled SaaS is not one discipline.

It is a stack — where each layer answers a different failure mode of AI adoption.

Think of your work as synthesising five distinct literatures, each solving a specific problem that “AI-first” thinking often ignores.

1. Habit & behaviour change

How repeated product use becomes automatic

The first question is deceptively simple:

Why would a user return to this AI feature tomorrow — without being reminded?

Two foundational models matter here:

Fogg Behaviour Model (FBM)

Behaviour happens when Motivation × Ability × Prompt converge.

In SaaS terms:

Motivation → perceived value, urgency, emotional payoff

Ability → cognitive effort, friction, learning cost

Prompt → contextual trigger (time, event, social cue)

AI features often fail because:

They assume high motivation (“this is obviously useful”)

They underestimate ability constraints (verification, prompt-writing, interpretation)

They rely on weak prompts (“Try our AI!”)

Behavioural engineering implication

Raise ability (reduce effort and friction) before trying to increase motivation

Design prompts that appear inside existing workflows, not as separate calls-to-action

Treat friction as a design variable, not an accident
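The three Fogg levers above can be turned into a quick diagnostic. This is a minimal Python sketch, not Fogg's own formulation: it assumes motivation and ability are scored from 0 to 1 and approximates the model's curved "action line" as motivation × ability ≥ a threshold. The `BehaviourState` type, the 0.25 threshold, and the helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BehaviourState:
    """Hypothetical 0-1 scores for one user at one moment."""
    motivation: float     # perceived value, urgency, emotional payoff
    ability: float        # 1.0 = effortless, 0.0 = very hard (effort, friction, learning cost)
    prompt_present: bool  # is a contextual trigger firing right now?


# Assumption: the curved action line is approximated by motivation * ability = threshold.
ACTION_THRESHOLD = 0.25


def behaviour_likely(state: BehaviourState, threshold: float = ACTION_THRESHOLD) -> bool:
    """A behaviour is expected only when a prompt arrives while the user is above the action line."""
    if not state.prompt_present:
        return False  # no prompt, no behaviour, however motivated the user is
    return state.motivation * state.ability >= threshold


def weakest_lever(state: BehaviourState) -> str:
    """Suggest which lever to work on first, favouring ability over motivation on ties."""
    if not state.prompt_present:
        return "prompt"
    return "ability" if state.ability <= state.motivation else "motivation"


# A motivated user facing a high-friction AI feature (e.g., writing prompts from scratch).
user = BehaviourState(motivation=0.8, ability=0.2, prompt_present=True)
print(behaviour_likely(user))  # False: 0.8 * 0.2 = 0.16 is below the 0.25 threshold
print(weakest_lever(user))     # ability
```

In this toy model, raising `ability` from 0.2 to 0.5 (for example, by replacing a blank prompt box with a one-click action) crosses the threshold with no change in motivation, which is why the implication above favours friction reduction over motivational messaging.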

The Hook Model (Trigger → Action → Reward → Investment)

Popularised by Nir Eyal, this model explains how routines form over time.

In AI SaaS:

Trigger: “Document opened”, “Deal moved to stage”, “PR created”

Action: small AI-assisted step

Reward: speed, clarity, confidence, relief

Investment: stored context, preferences, templates

Key insight

AI becomes sticky when each use makes the next use easier or more valuable.

This is why ephemeral AI suggestions don’t create habits — but AI that stores context does.
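The "each use makes the next use easier" property can be sketched as a context store that the Action step reads from and the Investment step writes to. This is an illustration under assumptions, not Eyal's formulation: `ContextStore`, `REQUIRED_FIELDS`, and the effort proxy (how many inputs the user had to type by hand) are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical inputs an AI drafting step needs before it can help.
REQUIRED_FIELDS = ("tone", "audience", "length")


@dataclass
class ContextStore:
    """Per-user 'Investment' that persists between uses."""
    preferences: dict[str, str] = field(default_factory=dict)
    triggers_seen: list[str] = field(default_factory=list)


def run_hook(store: ContextStore, trigger: str, answers: dict[str, str]) -> int:
    """One pass of Trigger -> Action -> Reward -> Investment.

    Returns how many inputs the user had to supply by hand this time (an effort proxy).
    """
    store.triggers_seen.append(trigger)  # Trigger: e.g. "document_opened"
    # Action: only ask for what the product does not already know.
    missing = [f for f in REQUIRED_FIELDS if f not in store.preferences]
    # Reward: the AI output would be rendered here (omitted in this sketch).
    # Investment: persist the answers so the next trigger needs less effort.
    for f in missing:
        if f in answers:
            store.preferences[f] = answers[f]
    return len(missing)


store = ContextStore()
answers = {"tone": "formal", "audience": "executives", "length": "short"}
first = run_hook(store, "document_opened", answers)
second = run_hook(store, "document_opened", answers)
print(first, second)  # 3 0
```

An ephemeral suggestion would cost the user three inputs every time; the drop to zero on the second use is the stored context doing the behavioural work.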

2. Habit formation evidence

How long does behaviour actually take to stick?

Product teams often assume:

“If it’s useful, people will adopt it”

“If adoption doesn’t happen in 2 weeks, it failed”

Empirical research strongly disagrees.

Lally et al. (2010): Habit formation in the real world

Key findings:

Median time to automaticity ≈ 66 days

Range: 18 to 254 days

Consistency matters more than intensity
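Lally et al. fitted each participant's self-reported automaticity with an asymptotic curve and took the point where it reached 95% of its asymptote as the time to automaticity. The same idea can be applied to product telemetry. The sketch below assumes a curve of the form A(t) = a − b·e^(−c·t) with parameters already fitted elsewhere; the function name and example values are illustrative, not figures from the study.

```python
import math


def days_to_automaticity(a: float, b: float, c: float, fraction: float = 0.95) -> float:
    """Days until A(t) = a - b*exp(-c*t) reaches `fraction` of its asymptote `a`.

    Solving a - b*exp(-c*t) = fraction*a gives t = ln(b / ((1 - fraction)*a)) / c.
    """
    if a <= 0 or b <= 0 or c <= 0:
        raise ValueError("a, b and c must be positive for a rising asymptotic curve")
    gap = (1 - fraction) * a  # distance below the asymptote that still counts as "automatic"
    if b <= gap:
        return 0.0  # the curve already starts at or above the target level
    return math.log(b / gap) / c


# Illustrative parameters: asymptote 40 on an automaticity scale, starting at 10, rate 0.05/day.
print(round(days_to_automaticity(a=40, b=30, c=0.05), 1))   # 54.2
# Halving the rate doubles the time to automaticity.
print(round(days_to_automaticity(a=40, b=30, c=0.025), 1))  # 108.3
```

The rate parameter `c` is the lever product teams can influence: lower friction and more consistent contexts should steepen the curve, which shows up as fewer days to the 95% mark.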

Why this matters for AI products

Expecting “AI adoption” in a sprint or two is unrealistic

Early friction is not failure — it’s part of learning

Habits form when behaviour is repeatable in a stable context

Behavioural engineering implication

Design AI features that support frequent, low-effort repetition

Measure trajectory, not just short-term conversion

Run retention experiments with realistic time horizons

If your AI requires high novelty or heavy prompting every time, it will never become habitual.

3. Trust & reliance

Why users either ignore AI or trust it too much

Trust in AI is not binary — it’s calibrated reliance.

Human factors research (Lee & See, 2004) shows that:

Under-trust → users ignore automation

Over-trust → users blindly follow automation (automation bias)

Both are dangerous.

Core principles from trust-in-automation literature

Transparency

Users should know what the AI is doing and why

Predictability

Similar inputs should produce similar behaviour

Graceful failure

When wrong, the system should fail visibly and recoverably

Common SaaS failure

AI “sounds confident” even when uncertain

Errors feel arbitrary

Users don’t know when to double-check

Behavioural engineering implication

Trust must be earned gradually, not demanded

Design explicit confidence cues, provenance, and fallback paths

Trust should increase with experience, not by default

The goal is not trust — it is appropriate reliance.
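One way to make confidence cues, provenance, and fallback paths concrete is a small policy that maps a suggestion's confidence to how the UI presents it. The thresholds, tier names, and `Suggestion` fields below are hypothetical; in practice they should be set from calibration data rather than chosen by hand.

```python
from dataclasses import dataclass
from enum import Enum


class Presentation(Enum):
    INLINE = "inline"        # high confidence with provenance: low-friction acceptance
    FLAGGED = "flagged"      # show with a visible "please verify" cue
    FALLBACK = "fallback"    # withhold the output and offer the manual path


@dataclass
class Suggestion:
    text: str
    confidence: float   # assumed to be a calibrated probability in [0, 1]
    sources: list[str]  # provenance the user can inspect


def present(s: Suggestion, high: float = 0.85, low: float = 0.5) -> tuple[Presentation, str]:
    """Map a suggestion to a UI treatment so confidence is visible rather than implied."""
    if s.confidence < low:
        # Fail visibly and recoverably instead of showing a shaky answer confidently.
        return Presentation.FALLBACK, "Not confident enough to suggest this. Continue manually?"
    if not s.sources:
        return Presentation.FLAGGED, f"{s.text} (no sources found; please verify)"
    if s.confidence >= high:
        return Presentation.INLINE, s.text
    return Presentation.FLAGGED, f"{s.text} (confidence {s.confidence:.0%}; please verify)"


tier, message = present(Suggestion("Renewal date: 14 March", 0.72, ["contract.pdf"]))
print(tier.name, "|", message)  # FLAGGED | Renewal date: 14 March (confidence 72%; please verify)
```

Note that missing provenance downgrades even a high-confidence suggestion: users should learn to double-check exactly where the system cannot show its work.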

4. Human–AI interaction & safety

How humans and AI actually collaborate

This literature answers a critical question:

When does AI improve human judgment — and when does it degrade it?

Research in Human–AI Interaction (HAI / HAIC) shows:

Human + AI is not automatically better than either alone

Performance depends on task structure, interface, and delegation model

Three concepts matter most

a) Interpretability (Doshi-Velez & Kim)

Interpretability is not universal — it is context-dependent.

High-stakes decisions → explanations matter

Low-stakes, repetitive tasks → speed matters more

Implication

Don’t over-explain everything.

Explain where behaviour or accountability depends on it.

b) Confidence calibration

AI systems should:

Express uncertainty when appropriate

Avoid false precision

Humans are poor at detecting overconfidence — UI must help.
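Whether a system avoids false precision can be measured. A common metric is expected calibration error (ECE): bin predictions by stated confidence and compare each bin's average confidence with its observed accuracy. The sketch below is a plain-Python version with equal-width bins; the bin count and the toy data are assumptions.

```python
def expected_calibration_error(confidences: list[float], correct: list[bool], n_bins: int = 10) -> float:
    """Expected calibration error with equal-width confidence bins.

    ECE = sum over bins of (bin size / N) * |accuracy in bin - mean confidence in bin|.
    0 means stated confidence matches observed accuracy; larger values mean false precision.
    """
    if not confidences or len(confidences) != len(correct):
        raise ValueError("need non-empty inputs of equal length")
    bins: list[list[int]] = [[] for _ in range(n_bins)]
    for i, p in enumerate(confidences):
        bins[min(int(p * n_bins), n_bins - 1)].append(i)  # p == 1.0 falls in the last bin
    n = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        accuracy = sum(correct[i] for i in members) / len(members)
        mean_conf = sum(confidences[i] for i in members) / len(members)
        ece += len(members) / n * abs(accuracy - mean_conf)
    return ece


# Toy data: the model claims 90% confidence but is right only 6 times out of 10.
overconfident = expected_calibration_error([0.9] * 10, [True] * 6 + [False] * 4)
print(round(overconfident, 2))  # 0.3
```

A persistently high ECE is a signal to stop showing raw confidence numbers in the UI until the model (or a recalibration step) brings them in line with reality.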

c) Conditional delegation

Instead of “AI always acts” or “AI always asks”:

Let users define when the AI can act autonomously and when it must defer.

This:

Reduces cognitive load

Preserves human judgment

Improves long-term trust
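Conditional delegation can be expressed as user-editable rules that the assistant checks before acting. The sketch below is one possible shape, not a standard API: each hypothetical `DelegationRule` names an action type, a minimum confidence, and whether irreversible actions are allowed; anything not covered by a rule defaults to asking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DelegationRule:
    action_type: str               # e.g. "tag_ticket", "send_email"
    min_confidence: float          # the AI may act alone at or above this confidence
    allow_irreversible: bool = False


def decide(rules: list[DelegationRule], action_type: str, confidence: float, reversible: bool) -> str:
    """Return 'act' if a user-defined rule permits autonomy, otherwise 'ask'."""
    for rule in rules:
        if rule.action_type != action_type:
            continue
        if not reversible and not rule.allow_irreversible:
            return "ask"  # irreversible actions need explicit permission in the rule
        return "act" if confidence >= rule.min_confidence else "ask"
    return "ask"  # default: no rule, no autonomy


rules = [
    DelegationRule("tag_ticket", min_confidence=0.8),
    DelegationRule("send_email", min_confidence=0.95),
]
print(decide(rules, "tag_ticket", 0.9, reversible=True))      # act
print(decide(rules, "send_email", 0.99, reversible=False))    # ask (irreversible, not allowed)
print(decide(rules, "delete_record", 0.99, reversible=True))  # ask (no rule)
```

Defaulting to "ask" keeps the burden of proof on autonomy: users widen the AI's remit rule by rule as their trust grows.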

Behavioural engineering implication

Design collaboration rules — not just capabilities.

5. Platform economics

Why some AI features create moats and others leak value

Finally, behavioural engineering must align with economic defensibility.

Platform economics explains why.

Katz & Shapiro: Network externalities

Value increases as:

More users adopt the same system

More shared artefacts accumulate

Expectations converge on a standard

In AI SaaS, this means:

Shared templates

Institutional memory

Team-level workflows

Switching costs (behavioural, not contractual)

True switching costs are:

Habits

Muscle memory

Social coordination

Embedded workflows

AI that lives outside workflows is easy to replace.

AI that lives inside them is not.

Composability vs embedding

Interoperate when the AI value is generic

Embed deeply when behaviour and memory matter

Behavioural engineering implication

Ask not:

“Can competitors copy this feature?”

Ask:

“Can they replicate the behaviours this feature has already shaped?”

Practical design patterns (how to translate theory into product features)

Below are repeatable patterns you can use when integrating AI into your SaaS product to produce defensibility through behaviour.

1) Persistent contextual memory (the product’s “institutional memory”)

What: Store and surface conversation/interaction history, decisions, templates, and rationale so the product remembers context across sessions and users.

Why: Persistent memory amplifies cumulative value — each interaction makes the system more useful to that customer and creates a behavioural sunk cost that’s hard to replicate.

How: Make memory explicit and exportable, provide revision history, and allow teams to curate shared knowledge (not just personal caches). Ensure privacy and access controls.
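A minimal sketch of memory that is explicit, exportable, versioned, and access-controlled, as described above. The `TeamMemory` class, its method names, and the simple reader-list permission model are hypothetical; a production system would sit on a database with real authentication.

```python
import json
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MemoryEntry:
    revisions: list[dict] = field(default_factory=list)  # full history, newest last
    readers: set[str] = field(default_factory=set)       # users allowed to see and edit the entry


class TeamMemory:
    """Shared, curated team knowledge with revision history, access control, and export."""

    def __init__(self) -> None:
        self._entries: dict[str, MemoryEntry] = {}

    def write(self, key: str, value: str, author: str, readers: set[str], rationale: str = "") -> None:
        entry = self._entries.get(key)
        if entry is None:
            entry = self._entries[key] = MemoryEntry()
        elif author not in entry.readers:
            raise PermissionError(f"{author} cannot edit {key!r}")
        entry.readers |= readers | {author}
        # Append rather than overwrite, so past decisions and their rationale stay visible.
        entry.revisions.append({"value": value, "author": author, "rationale": rationale})

    def _visible(self, key: str, user: str) -> Optional[MemoryEntry]:
        entry = self._entries.get(key)
        return entry if entry is not None and user in entry.readers else None

    def read(self, key: str, user: str) -> Optional[str]:
        entry = self._visible(key, user)
        return entry.revisions[-1]["value"] if entry else None

    def history(self, key: str, user: str) -> list[dict]:
        entry = self._visible(key, user)
        return list(entry.revisions) if entry else []

    def export(self, user: str) -> str:
        """Everything this user may see, as JSON (memory stays explicit and exportable)."""
        visible = {k: e.revisions for k, e in self._entries.items() if user in e.readers}
        return json.dumps(visible, indent=2)


memory = TeamMemory()
memory.write("pricing_tone", "Avoid discount language", author="ana", readers={"ben"}, rationale="Q3 review")
memory.write("pricing_tone", "Avoid discounts; lead with ROI", author="ben", readers=set(), rationale="Sales feedback")
print(memory.read("pricing_tone", "ana"))          # Avoid discounts; lead with ROI
print(len(memory.history("pricing_tone", "ana")))  # 2
print(memory.read("pricing_tone", "eve"))          # None
```

The revision list is what turns a cache into institutional memory: the team can see not just the current guidance but who changed it and why.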

2) Conditional delegation (human-in-the-loop rules)

What: Allow users to set rules or “trust zones” where the AI can act autonomously and where it should require human sign-off.

Why: Reduces verification burdens while avoiding automation bias in high-risk contexts; improves calibrated trust. Research shows that conditional delegation can improve human–AI workflows.

3) Progressive disclosure + explainability

What: Start with simple suggestions; offer layered explanations and provenance on demand (why this suggestion, confidence, data sources).

Why: Interpretability matters when users make consequential decisions; it reduces over-reliance and increases acceptance where appropriate. Doshi-Velez & Kim outline when interpretability is needed and how to evaluate it.
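Progressive disclosure can be modelled as explanation layers that the UI reveals one at a time, so the default view stays light and provenance is one click away. The `ExplainedSuggestion` type and its layer order are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class ExplainedSuggestion:
    suggestion: str     # layer 0: what the AI proposes
    reason: str         # layer 1: short "why this suggestion"
    confidence: float   # layer 2: how sure the system is
    sources: list[str]  # layer 3: provenance / data sources

    def render(self, depth: int = 0) -> list[str]:
        """Return the lines to show at a given disclosure depth (0 = suggestion only)."""
        layers = [
            self.suggestion,
            f"Why: {self.reason}",
            f"Confidence: {self.confidence:.0%}",
            "Sources: " + (", ".join(self.sources) if self.sources else "none recorded"),
        ]
        depth = max(0, min(depth, len(layers) - 1))  # clamp to the available layers
        return layers[: depth + 1]


s = ExplainedSuggestion(
    suggestion="Move the renewal call to Tuesday",
    reason="This customer has replied fastest on Tuesdays",
    confidence=0.64,
    sources=["CRM activity log"],
)
print(s.render())   # ['Move the renewal call to Tuesday']
print(s.render(3))  # all four layers, down to the data sources
```

Logging which depth users open, and whether opening it changes their accept/reject decision, is a cheap way to find out which explanations actually matter.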

4) Micro-habits & trigger engineering

What: Break desired workflows into tiny, low-friction actions and use contextual prompts (time, event, teammate action) to trigger them. Combine with small variable rewards (progress bars, micro-feedback). Design these loops using the Fogg Behaviour Model and Eyal's Hook Model described above.
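Trigger engineering usually needs two pieces: a mapping from in-workflow events to a single micro-action, and a cap so prompts do not become noise. The sketch below assumes hypothetical event names, prompt texts, and a per-user, per-event cooldown; real values should come from experiments.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical mapping from in-workflow events to one small AI-assisted step each.
MICRO_ACTIONS = {
    "document_opened": "Summarise changes since your last visit?",
    "deal_stage_changed": "Draft the stage-change note for review?",
    "pr_created": "Suggest a PR description from the diff?",
}


class TriggerEngine:
    """Turns workflow events into micro-prompts, with a cooldown to avoid prompt fatigue."""

    def __init__(self, cooldown: timedelta = timedelta(hours=4)) -> None:
        self.cooldown = cooldown
        self._last_prompted: dict[tuple[str, str], datetime] = {}

    def on_event(self, user: str, event: str, now: datetime) -> Optional[str]:
        action = MICRO_ACTIONS.get(event)
        if action is None:
            return None  # not every event deserves a prompt
        last = self._last_prompted.get((user, event))
        if last is not None and now - last < self.cooldown:
            return None  # still cooling down: stay quiet
        self._last_prompted[(user, event)] = now
        return action


engine = TriggerEngine()
t0 = datetime(2024, 1, 8, 9, 0)
print(engine.on_event("ana", "pr_created", t0))                       # prompts
print(engine.on_event("ana", "pr_created", t0 + timedelta(hours=1)))  # None (cooling down)
print(engine.on_event("ana", "pr_created", t0 + timedelta(hours=5)))  # prompts again
```

The prompt lives inside the event the user already performs (opening a PR), which is the "prompt inside existing workflows" principle in code form.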

5) Community templates & shared artefacts

What: Enable users to create, share, and adapt templates, automations, and playbooks that reflect real workflows.

Why: Community artefacts are social proof and accelerate adoption; they create social lock-in and learning economies (Notion and others use this).

6) Default + opt-in safety

What: Choose safe defaults (conservative automation, opt-in for destructive actions), while letting power users opt into more aggressive automation.

Why: Preserves trust, reduces liability, and avoids mass automation failures that cause reputational damage.
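Safe defaults are easiest to enforce when a single settings object is consulted by every automation path. This is a sketch under assumed names (`AutomationSettings`, `may_execute`, and the capability strings are hypothetical): every autonomous capability starts off, and destructive actions additionally need a per-action confirmation even for users who have opted in.

```python
from dataclasses import dataclass, field


@dataclass
class AutomationSettings:
    """Conservative by default: no autonomous capability is enabled until a user opts in."""
    opted_in: set[str] = field(default_factory=set)

    def opt_in(self, capability: str) -> None:
        self.opted_in.add(capability)


def may_execute(settings: AutomationSettings, capability: str,
                destructive: bool, confirmed: bool = False) -> bool:
    """Autonomy requires an opt-in; destructive actions also need per-action confirmation."""
    if capability not in settings.opted_in:
        return False  # safe default: suggest, don't act
    if destructive and not confirmed:
        return False  # opting in never removes the confirmation step for destructive actions
    return True


power_user = AutomationSettings()
print(may_execute(power_user, "auto_archive", destructive=False))  # False (default)
power_user.opt_in("auto_archive")
power_user.opt_in("bulk_delete")
print(may_execute(power_user, "auto_archive", destructive=False))  # True
print(may_execute(power_user, "bulk_delete", destructive=True))    # False until confirmed
print(may_execute(power_user, "bulk_delete", destructive=True, confirmed=True))  # True
```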

7) Social/organizational affordances

What: Design shared annotations, approvals, and audit trails that make AI outputs part of an organization’s process rather than an individual’s black box.

Why: Organisational embedding creates switching friction that is behavioural and institutional.
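Shared annotations, approvals, and audit trails can rest on one underlying record: each AI output, who edited or approved it, and why. A minimal sketch with hypothetical field names; the hash chaining is an optional assumption that makes after-the-fact edits to the log detectable, not a requirement of the pattern.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditTrail:
    """Append-only log of what the AI produced and what people did with it."""
    records: list[dict] = field(default_factory=list)

    def append(self, output_id: str, actor: str, action: str, note: str = "") -> None:
        body = {
            "output_id": output_id, "actor": actor, "action": action, "note": note,
            "prev": self.records[-1]["hash"] if self.records else "",
        }
        # Chaining each record to the previous hash makes silent edits detectable.
        self.records.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        prev = ""
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != record["hash"]:
                return False
            prev = record["hash"]
        return True

    def approvers(self, output_id: str) -> list[str]:
        return [r["actor"] for r in self.records
                if r["output_id"] == output_id and r["action"] == "approved"]


trail = AuditTrail()
trail.append("summary-42", "assistant", "generated")
trail.append("summary-42", "ana", "edited", "Corrected the renewal date")
trail.append("summary-42", "ben", "approved")
print(trail.approvers("summary-42"))  # ['ben']
print(trail.verify())                 # True

tampered = AuditTrail(records=[dict(r) for r in trail.records])
tampered.records[1]["note"] = "Quietly changed"
print(tampered.verify())              # False
```

Because the record names the human who approved an output, the AI's contribution becomes part of the organisation's process rather than an individual's black box.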

8) Ethics & consent baked into UX

What: Communicate how data is used, offer consent nudges, and allow data minimization and deletion flows. Adopt a transparent “choice architecture” consistent with accepted ethical frameworks (nudge ethics, persuasive tech critiques).

Case studies (what worked — and why)

Grammarly — from spellchecks to habit-forming writing assistant

What they did:

Evolved from a passive spellchecker into an always-on, context-aware writing assistant across browsers, editors, and enterprise suites. They combined AI suggestions, inline feedback, and productivity reporting to make users rely on and internalize better writing habits. For enterprise customers, Grammarly layers administrative controls and style guides, embedding it in organizational norms.

Why behavioural engineering mattered:

Grammarly’s persistent integration across contexts and consistent feedback loop created automaticity in users’ writing workflows. The product’s cross-device presence and team settings became organizational defaults (institutional memory + social proof).

Takeaway:

For AI features, owning the context (where the user writes) + persistent, personalized feedback + team conventions produces stickiness.

Notion — templates, community, and shared workflows

What they did:

Notion made the product extremely malleable and then seeded a vast template ecosystem and community. Templates lower the activation energy for new workflows; community sharing accelerates adoption and creates social norms. Notion’s templates and public pages become shared artefacts that teams adopt and adapt.

Why behavioural engineering mattered:

By raising ability (in Fogg terms, making new workflows easier to start) and providing prompts (templates + community), Notion triggered habitual usage and embedded itself inside team workflows, creating a behavioural lock that’s hard for point AI tools to dislodge.

Takeaway:

If your AI enables a workflow template that teams adopt (e.g., candidate screening playbooks, meeting summarization templates), you win institutional embedding.

Slack — network effects + ritualization

What they did:

Slack turned communication into a habit by making it the default interaction layer for teams (real-time channels, notifications, reactions). Teams ritualized Slack usage (standups, incident channels), and integrations embedded third-party tools into Slack’s context.

Why behavioural engineering mattered:

Slack’s value is social: the more teams use it, the more valuable it becomes. AI features (summaries, thread insights) must respect and enhance these rituals rather than interrupt them.

Takeaway:

When AI features support existing social rituals and reduce coordination friction — and when the product stores shared artefacts and signals — they strengthen network effects.

Duolingo — AI layered on top of habit, not novelty

What they did:

Duolingo built one of the strongest habit-forming consumer products before AI became fashionable. As AI matured, Duolingo layered personalisation, adaptive difficulty, and feedback on top of an already robust behavioural system built around streaks, micro-lessons, and gamified progression. AI was used to fine-tune pacing, content sequencing, and error correction — not to replace the core learning loop.

Why behavioural engineering mattered:

Duolingo’s success is driven by ritualisation. Daily usage is anchored by streaks and loss aversion, while lessons are deliberately short to reduce ability barriers. AI works because it reinforces an existing habit loop rather than asking users to learn a new one. The product optimises for consistency over intensity, aligning closely with empirical habit formation research.

Takeaway:

AI accelerates adoption only when behaviour is already designed. If your SaaS product lacks a repeatable usage ritual, adding AI personalisation will not magically create one.

Figma — AI that respects creative and social workflows

What they did:

Figma embedded AI assistance into an already dominant collaborative design workflow. Instead of introducing AI as a disruptive “mode,” Figma integrated it into existing actions — generating variants, assisting layout, accelerating iteration — while preserving real-time collaboration, comments, and shared ownership of artefacts.

Why behavioural engineering mattered:

Design work is inherently social and iterative. Figma’s behavioural moat comes from shared files, visible decision-making, and collective accountability. AI features succeed because they augment creative rituals rather than bypass them. Importantly, Figma avoided premature full automation of judgment-heavy tasks, maintaining trust and preserving human authorship.

Takeaway:

AI strengthens products when it enhances existing social rituals and shared artefacts. When AI shortcuts collaboration or removes explainability, it erodes trust instead of compounding value.

Counterintuitive case studies (where “more AI” was not the advantage)

Linear — minimal AI, maximal behavioural discipline

What they did:

Linear succeeded in an AI-saturated project management market by doing something countercultural: less. Instead of competing on AI surface area, Linear enforced opinionated workflows, low-noise defaults, and clear expectations for how teams should manage work. The product deliberately constrained choice in favour of speed, clarity, and consistency.

Why behavioural engineering mattered:

Linear engineered discipline over flexibility. By reducing cognitive overhead and eliminating configuration sprawl, it created predictable team rituals around issue tracking and prioritisation. Teams didn’t need AI to tell them what to do — the product’s structure itself guided behaviour.

Takeaway:

Sometimes behavioural constraint is more defensible than an AI augmentation. Clarity beats intelligence when coordination is the core problem.

Calendly — automation without “AI theatre”

What they did:

Calendly eliminated scheduling friction not through intelligence but through behavioural redesign. It shifted scheduling from a socially awkward, back-and-forth negotiation to a simple asynchronous expectation. Users share availability once; the system enforces the norm.

Why behavioural engineering mattered:

Calendly normalised a new social behaviour. By reducing anxiety and ambiguity around time coordination, it created a predictable interaction pattern that required no explanation, training, or trust calibration. The automation was silent — and therefore widely accepted.

Takeaway:

Not every problem needs AI. Some need a rethinking of social behaviour and norms.

Failure & cautionary tales: when behavioural engineering was ignored

These cases matter more than the successes — because they show how AI fails even when technically strong.

Enterprise CRM chatbots — high capability, low adoption

What they did:

Many CRM platforms introduced AI chat assistants that generated summaries, suggested next steps, and auto-filled fields — all logically useful capabilities.

Why behavioural engineering failed:

There were no clear trust boundaries, no accountability when AI was wrong, and no reduction in verification effort. Instead of saving time, AI increased cognitive anxiety.

Behavioural outcome:

Users reverted to manual workflows. AI became a novelty rather than a habit. Fear of automation bias led to disengagement.

Key lesson:

If AI increases cognitive load or anxiety, users disengage — regardless of accuracy.

Auto-ML platforms — democratised AI, orphaned behaviour

What they did:

Auto-ML tools promised to make advanced modelling accessible to non-technical users by abstracting complexity behind automated pipelines.

Why behavioural engineering failed:

Business users distrusted opaque outputs. Data scientists resisted loss of control. Outputs lacked organisational legitimacy because no one clearly “owned” decisions.

Behavioural outcome:

No shared accountability, no institutional embedding, and no learning loop between humans and models.

Net result:

Technically impressive. Behaviourally orphaned.

Voice assistants in enterprise contexts — interface novelty, context failure

What they did:

Voice AI systems (e.g., enterprise voice assistants) attempted to translate consumer success into workplace productivity.

Why behavioural engineering failed:

Workplace norms demand auditability, shared artefacts, and traceability. Voice interactions produced none of these. Errors were socially costly, invisible, and hard to recover from.

Behavioural mismatch:

No persistent memory. No social visibility. No institutional trace.

Lesson:

Behavioural context matters more than interface novelty.

AI code review tools that over-automate

What they did:

Some AI tools attempted fully automated code reviews, bypassing human judgment and discussion.

Why behavioural engineering failed:

When edge cases slipped through, trust collapsed. Teams rejected black-box approvals, and social learning (understanding why something was flagged) disappeared.

Behavioural outcome:

Mentorship was removed, shared understanding eroded, and risk aversion increased.

Net result:

Teams reverted to human review — or adopted tools that assist, not replace.

Synthesis: a behavioural failure pattern library

Across these failures, the same anti-patterns recur:

❌ AI introduced without behavioural scaffolding

❌ Automation without accountability

❌ Intelligence without explainability

❌ Speed without trust calibration

❌ Individual optimisation without organisational embedding

Meanwhile, winning products consistently:

✅ Preserve or strengthen rituals

✅ Create shared artefacts

✅ Engineer habit loops

✅ Allow conditional delegation

✅ Make AI socially legible

“AI fails not when models are weak — but when behaviour is left unmanaged.”

A playbook for product leaders — 10 concrete steps

Map the behavioural loop. For each AI feature, explicitly map triggers, actions, rewards, and investment (Hook) and annotate where trust & verification occur.

Prioritize durable context. Ask: Does this AI produce an artefact (document, template, annotation) that’s stored, shareable, and discoverable? Prioritize features that create persistent artefacts.

Design conditional delegation. Let users specify when the AI can act autonomously vs when it must ask. Track delegation outcomes to refine trust policies.
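Tracking delegation outcomes to refine trust policies can start as a simple feedback rule: if users keep reverting the AI's autonomous actions of a given type, raise that type's confidence threshold; if reverts are rare over enough actions, lower it cautiously. The function name, target revert rate, step size, floor, ceiling, and minimum sample below are hypothetical tuning parameters, not recommended values.

```python
def adjust_threshold(current: float, autonomous_actions: int, reverted: int,
                     max_revert_rate: float = 0.05, min_samples: int = 50,
                     step: float = 0.02, floor: float = 0.6, ceiling: float = 0.99) -> float:
    """Return an updated min-confidence threshold for one action type.

    A revert is a user undoing an action the AI took on its own.
    """
    if autonomous_actions < min_samples:
        return current  # too little evidence: leave the policy alone
    revert_rate = reverted / autonomous_actions
    if revert_rate > max_revert_rate:
        return min(ceiling, current + step)  # users keep correcting the AI: require more confidence
    if revert_rate < max_revert_rate / 2:
        return max(floor, current - step)    # reliably right: allow a little more autonomy
    return current                           # inside the tolerance band: hold steady


print(round(adjust_threshold(0.80, autonomous_actions=30, reverted=10), 2))   # 0.8 (too few samples)
print(round(adjust_threshold(0.80, autonomous_actions=100, reverted=12), 2))  # 0.82
print(round(adjust_threshold(0.80, autonomous_actions=200, reverted=2), 2))   # 0.78
```

The band between half the target rate and the target keeps the threshold from oscillating on noise; a real policy might also loosen more slowly than it tightens.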

Measure behavioural outcomes, not just model metrics. Track habit measures (repeat rates, time-to-automaticity), calibration (how often users verify), and institutional adoption (team share, artefact reuse).

Implement progressive explainability. Provide lightweight explanations in the UI and deeper provenance on demand. Evaluate whether each explanation changes behaviour.

Create social scaffolding. Templates, playbooks, and community galleries turn private gains public and accelerate adoption (Notion model).

Protect against automation bias. Use UI cues, forced verification for risky operations, and training to reduce blind trust, and ground these countermeasures in the automation-bias literature (e.g., Mosier & Skitka).

Ethical defaults & consent flows. Make data use transparent, provide clear opt-outs, and use safe defaults for actions with irreversible consequences.

Build composable interoperability — deliberately. Decide where to compete vs. where to interoperate. Composability can widen your footprint, but make sure interop surfaces feed your persistent memory or social artefacts to keep lock-in (rather than externalizing the artefact).

Run longitudinal pilots. Habit formation and trust calibration take weeks to months, so run design experiments over realistic timelines (Lally et al. report a median of 66 days to automaticity, ranging from 18 to 254 days) and track automaticity and institutional uptake.

Ethics, regulation, and limits — when behavioural engineering becomes manipulation

Persuasive design and nudging can improve outcomes, but they can also cross ethical lines. The literature on persuasive technology warns product teams to be explicit about goals and consent; nudge theory offers frameworks but also critiques. Build guardrails: independent ethics review, transparent logging of nudges/automation, and “explain this to my manager” features for organizational accountability.

Measuring success: the right KPIs

Move beyond model accuracy to behavioural KPIs:

Adoption velocity (team activation, artefact reuse)

Automaticity index (proxy: % users who perform X without a prompt after T days)

Calibration score (ratio of verified vs accepted suggestions; false acceptance rate)

Task outcome delta (does human + AI outperform the best single agent for the task?)

Organizational embedding (templates shared, internal docs referencing outputs)
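Several of these KPIs can be computed directly from event logs. The sketch below assumes a hypothetical log shape (`UsageEvent` and `SuggestionOutcome` records) and uses one possible operationalisation of the proxy definitions above; the field names, the choice of T, and the way correctness is judged are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class UsageEvent:
    user: str
    day: int        # days since the user first used the feature
    action: str
    prompted: bool  # did a product prompt immediately precede the action?


@dataclass
class SuggestionOutcome:
    accepted: bool
    verified: bool  # did the user check the output before accepting it?
    correct: bool   # judged afterwards, e.g. by audit or later correction


def automaticity_index(events: list[UsageEvent], action: str, t_days: int) -> float:
    """Share of adopters of `action` who perform it without a prompt on or after day T."""
    adopters = {e.user for e in events if e.action == action}
    automatic = {e.user for e in events
                 if e.action == action and e.day >= t_days and not e.prompted}
    return len(automatic) / len(adopters) if adopters else 0.0


def calibration_metrics(outcomes: list[SuggestionOutcome]) -> dict[str, float]:
    """Verification rate and false acceptance rate among accepted suggestions."""
    accepted = [o for o in outcomes if o.accepted]
    if not accepted:
        return {"verification_rate": 0.0, "false_acceptance_rate": 0.0}
    return {
        "verification_rate": sum(o.verified for o in accepted) / len(accepted),
        "false_acceptance_rate": sum(not o.correct for o in accepted) / len(accepted),
    }


def task_outcome_delta(human: float, ai: float, combined: float) -> float:
    """Positive only when the human + AI team beats the best single agent."""
    return combined - max(human, ai)


events = [
    UsageEvent("ana", 3, "summarise", prompted=True),
    UsageEvent("ana", 70, "summarise", prompted=False),
    UsageEvent("ben", 5, "summarise", prompted=True),
    UsageEvent("ben", 72, "summarise", prompted=True),
]
# T = 66 echoes Lally et al.'s median; it is an assumption, not a standard.
print(automaticity_index(events, "summarise", t_days=66))  # 0.5
outcomes = [
    SuggestionOutcome(accepted=True, verified=True, correct=True),
    SuggestionOutcome(accepted=True, verified=False, correct=False),
    SuggestionOutcome(accepted=True, verified=False, correct=True),
    SuggestionOutcome(accepted=False, verified=False, correct=True),
]
print(calibration_metrics(outcomes))
print(round(task_outcome_delta(human=0.78, ai=0.85, combined=0.83), 2))  # -0.02
```

A negative task outcome delta, as in the last line, is the situation the meta-analysis cited earlier warns about: on that task the combination underperforms the AI alone, so the collaboration design (not the model) is what needs work.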

Use both quantitative experiments and qualitative interviews to surface trust and workflow friction.

Final note: why behavioural engineering is a defensibility strategy

Technology cycles make functionality fungible — today’s best-of-breed can be tomorrow’s library. Behavioural engineering creates social, cognitive, and institutional lock-in: the product becomes part of how people work, remember, and coordinate. That is the kind of defensibility that survives modularization and composability.

If you focus on three ingredients — persistent context, calibrated trust, and social artefacts — you’ll create AI features that are not only useful but integrated into company workflows and habits. Those behavioural ties, paired with sound ethics and rigorous measurement, are the strategic moat for AI-enabled SaaS.

Selected references & further reading

Scholarly & foundational

Lee, J. D., & See, K. A. (2004). Trust in Automation: Designing for Appropriate Reliance. Human Factors.

Lally, P., van Jaarsveld, C. H. M., Potts, H. W. W., & Wardle, J. (2010). How are habits formed: Modelling habit formation in the real world. European Journal of Social Psychology.

Doshi-Velez, F., & Kim, B. (2017). Towards a rigorous science of interpretable machine learning. arXiv.

Katz, M. L., & Shapiro, C. (1985). Network Externalities, Competition, and Compatibility. American Economic Review.

Mosier, K. L., & Skitka, L. J. (1996/1997). Automation bias and decision-making. (See reviews on automation bias).

Human–AI interaction & evaluation

Evaluating Human–AI Collaboration: A Review and Methodological Framework (2024).

Vaccaro, M. et al. (2024). When combinations of humans and AI are useful. Nature Human Behaviour (meta-analysis on human–AI systems).

Ethics & persuasive tech

Berdichevsky, D., & Neuenschwander, E. (1999). Toward an Ethics of Persuasive Technology. Communications of the ACM.

Thaler, R., & Sunstein, C. (2008). Nudge: Improving Decisions about Health, Wealth, and Happiness.

Practitioner & case analyses
